AI companies face unique hardware challenges, particularly when it comes to high-performance computing (HPC) and GPU-based systems. Central to these challenges is securing enough memory bandwidth to support the massive computational workloads associated with training large AI models.

Among the components involved, HBM3 memory modules are key to achieving the GPU's full potential. Working with a trusted supplier of H100 HBM3 memory parts is crucial for supply stability, performance consistency, and cost efficiency.

Understanding Memory Requirements for AI GPU Systems

AI workloads, such as large language models and computer vision systems, require massive amounts of memory bandwidth. GPUs in AI infrastructure need memory that supports fast parallel processing, low-latency access, and high bandwidth. HBM3, the third generation of High Bandwidth Memory, was developed to meet these requirements. Its wide memory bus, stacked architecture, and high-speed interface make it well suited to next-generation AI GPU systems.
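As a rough illustration of how a wide bus and a fast interface translate into bandwidth, the sketch below computes theoretical peak bandwidth from bus width and per-pin data rate. The 1024-bit interface per stack and the 6.4 Gb/s maximum pin rate follow the JEDEC HBM3 standard; the function name and the five-stack configuration are illustrative assumptions, and shipping products often run below the maximum pin rate.

```python
def peak_bandwidth_gbs(bus_width_bits: int, pin_rate_gbps: float, stacks: int = 1) -> float:
    """Theoretical peak bandwidth in GB/s.

    bus_width_bits: data pins per stack (1024 for HBM3)
    pin_rate_gbps:  data rate per pin in Gb/s
    stacks:         number of memory stacks (hypothetical here)
    """
    # bits per second across all pins, divided by 8 to convert to bytes
    return bus_width_bits * pin_rate_gbps / 8 * stacks


per_stack = peak_bandwidth_gbs(1024, 6.4)
five_stacks = peak_bandwidth_gbs(1024, 6.4, stacks=5)  # assumed stack count

print(f"{per_stack:.1f} GB/s per stack")        # 819.2 GB/s per stack
print(f"{five_stacks:.1f} GB/s for 5 stacks")   # 4096.0 GB/s for 5 stacks
```

These are upper bounds; sustained bandwidth in a real system depends on the actual pin rate, access patterns, and controller efficiency.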

Getting hold of HBM3 modules is no easy feat.

Production capacity is limited, and the market is frequently constrained by heavy demand from HPC, AI, and graphics-intensive applications. For businesses running AI workloads, knowing where to get these vital parts matters, and choosing an H100 HBM3 memory components distributor is a strategic decision.

Key Strategies for Sourcing HBM3 Memory

1. Partnering with Specialized Distributors

The first step for AI firms is to find distributors that specialize in high-performance memory parts. A reliable H100 HBM3 memory parts supplier has a good relationship with the manufacturer and, in many cases, has reserved allocations or early access to new memory technologies. These vendors can help keep AI projects on track, even in times of high demand.

For enterprises seeking more information on sourcing strategies, a manufacturer page provides general guidance on how distributors handle high-performance memory allocation and availability. These resources can help companies navigate lead times and volume requirements.

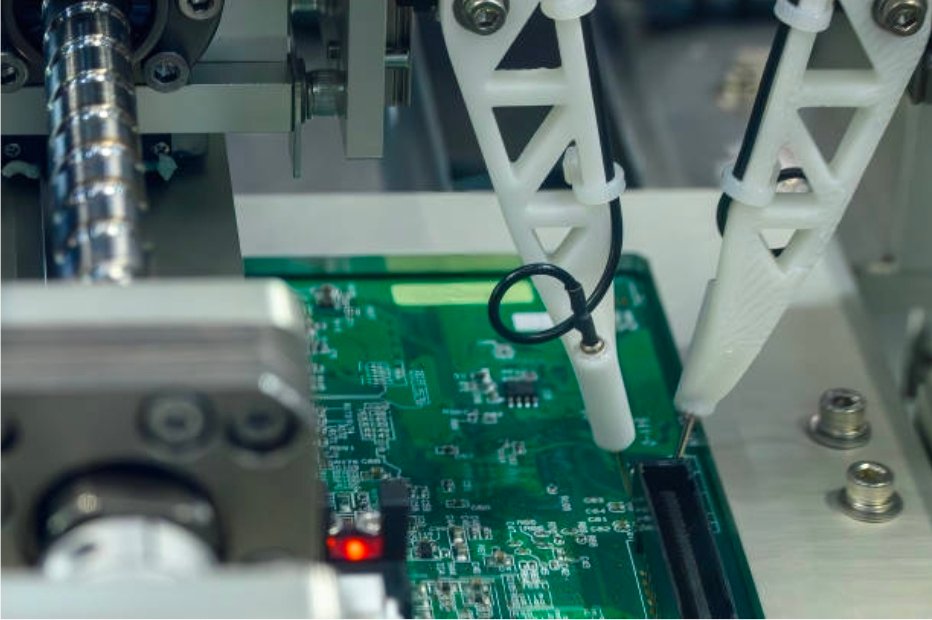

2. Leveraging Authorized Distribution Networks

HBM3 memory modules from authorized distributors are sold as genuine parts that meet the required specifications. AI companies looking for dependable H100 HBM3 memory parts providers can rely on an authorized network to deliver quality modules with warranty support that meet the required performance levels. Working with these distributors reduces risk in high-stakes AI projects, where a failed component can bring servers down or, worse, lead to erroneous calculations.

3. Exploring Global Sourcing Options

Given the limited manufacturing capacity for HBM3, companies often benefit from distributors with a global presence. An H100 HBM3 memory components distributor with international networks can source modules from multiple regions, reducing the impact of localized shortages or logistics delays. This global reach allows AI companies to maintain flexibility in procurement and optimize inventory across multiple GPU systems.

4. Pre-Planning and Forecasting

Demand for HBM3 memory is high, and supply delays can push out AI projects. Organizations that plan in advance and forecast their memory needs can secure allocations before shortages become more severe. Partnering with an H100 HBM3 memory components supplier that provides inventory visibility and availability forecasts allows AI companies to stay one step ahead, keeping vital components on hand when required.
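The planning arithmetic behind this can be sketched in a few lines. The example below works backward from a deployment date to the latest date an order could be placed, and pads the order quantity with spares. Every number here (lead time, buffer, unit count, spare percentage) is a hypothetical placeholder rather than market data, and the function names are illustrative only.

```python
from datetime import date, timedelta


def latest_order_date(need_by: date, lead_time_weeks: int, buffer_weeks: int = 0) -> date:
    """Latest date to place an order so parts arrive by need_by."""
    return need_by - timedelta(weeks=lead_time_weeks + buffer_weeks)


def units_to_order(base_units: int, spare_percent: int = 0) -> int:
    """Base requirement plus spares, rounding the spare count up."""
    spares = (base_units * spare_percent + 99) // 100  # integer ceiling
    return base_units + spares


# Hypothetical plan: parts needed by 1 Sep 2025, assumed 26-week lead time,
# 4-week safety buffer, 320 units with 5% spares.
order_by = latest_order_date(date(2025, 9, 1), lead_time_weeks=26, buffer_weeks=4)
quantity = units_to_order(320, spare_percent=5)

print(order_by)   # 2025-02-03
print(quantity)   # 336
```

In practice the lead time and buffer would come from the distributor's own availability data, which is why inventory visibility matters when choosing a supplier.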

5. Supporting High-Performance Applications

AI systems rely on memory not only for capacity but also for performance and reliability. High-bandwidth memory is essential for tasks such as deep learning model training, real-time inference, and large-scale simulations. An experienced H100 HBM3 memory components distributor provides modules optimized for high throughput, low latency, and multi-GPU configurations, supporting the performance requirements of cutting-edge AI workloads.

The Value of Distributor Partnerships

Independent and specialized distributors play a vital role in bridging the gap between memory manufacturers and AI companies. A well-chosen H100 HBM3 memory components distributor provides:

- Guaranteed access to scarce HBM3 modules

- Technical guidance for multi-GPU memory configurations

- Flexibility in order size and delivery timing

- Insight into market trends and component availability

For AI companies, these capabilities are essential for sustaining development timelines and meeting project goals. A manufacturer page often provides general information about distribution models and allocation priorities, helping enterprises plan their procurement strategies.

Conclusion

Obtaining HBM3 memory for AI GPU systems is critical to performance, reliability, and project schedules. AI companies need to choose an H100 HBM3 memory components distributor carefully to get suitable HPC memory, reduce supply-related risk, and maximize their HPC infrastructure investments. By using specialized distributors, authorized networks, global sourcing, and predictive forecasting, AI companies can find the memory needed to fuel next-generation computational workloads.

A partnership with an H100 HBM3 memory components distributor is more than a simple logistics concern; it is a foundational part of AI infrastructure planning. Dependable providers help keep AI systems running, projects on schedule, and performance up to par. For organizations trying to push the envelope of AI, obtaining HBM3 memory through trusted and legitimate channels is key to maintaining a competitive edge in an increasingly challenging field.